Role: UX Researcher & Interaction Designer

What I owned: Trust framework development, conversation flow redesign, ethical analysis, ideation facilitation

Focus: Trust in AI, conversational design for vulnerable users, ethics of anthropomorphism in public services

Project type: Government AI service, part of a municipal digital inclusion initiative

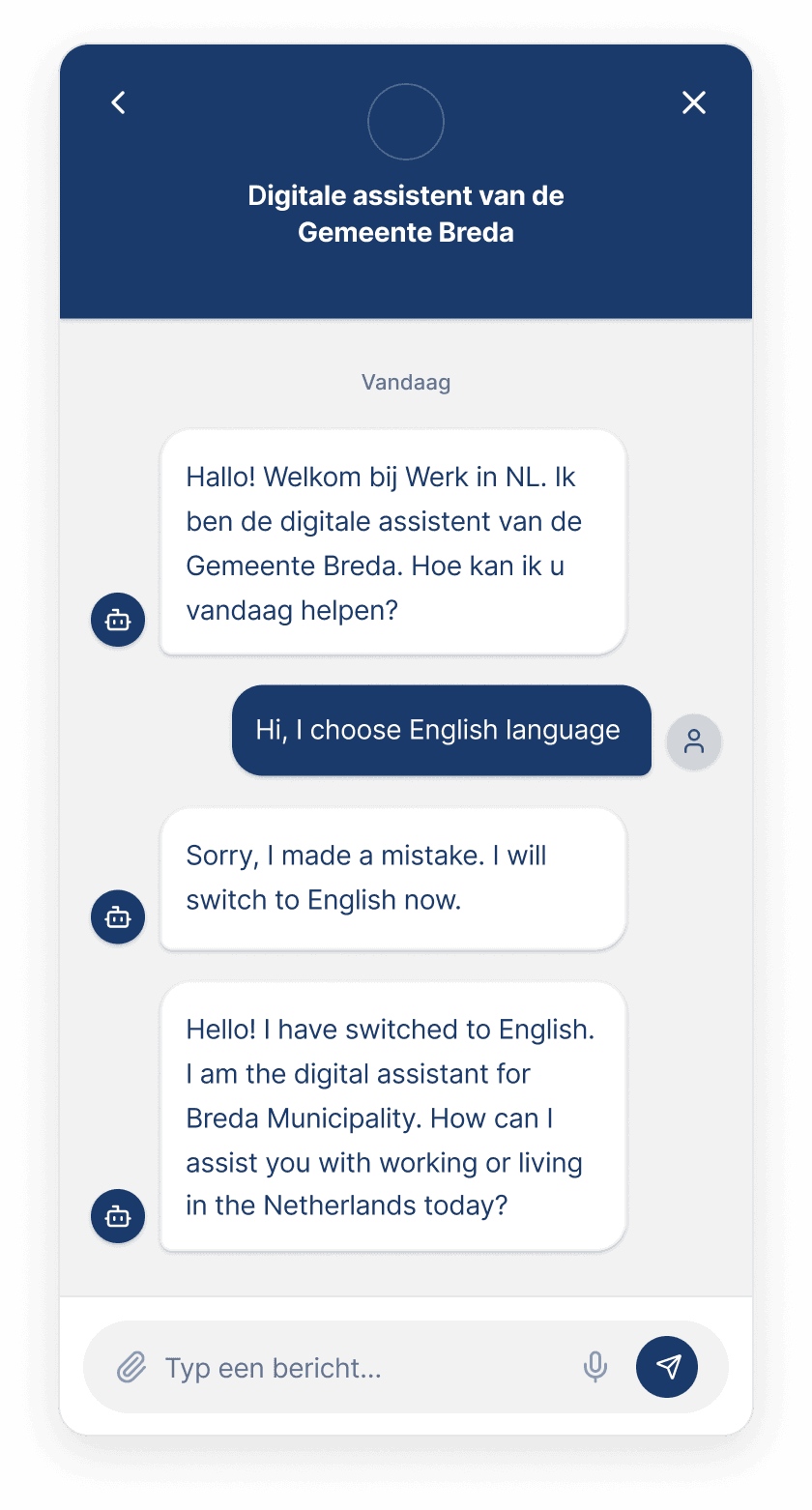

I started by auditing the existing avatar experience across every touchpoint: the kiosk environment, the conversation flow, the visual design, and the way it handled errors. Instead of evaluating what worked, I mapped what was actively breaking trust. Four barriers emerged: the avatar's uncanny-valley appearance produced discomfort rather than connection; it opened in Dutch and only switched languages after users were already confused; the public kiosk setting prevented people from asking sensitive questions; and the conversation dumped large blocks of information on users instead of guiding them step by step.

No single trust theory captured all of these problems, so I built a custom framework combining three: McKnight et al. (2011) for functional trust in technology, Sucameli (2021) for the emotional calibration between humanizing and dismissing AI, and de Visser et al. (2016) for how trust can be repaired after system failures. In parallel, I wrote a critical ethical analysis of the risks of deploying hyper-realistic AI for vulnerable populations. It argues that a MetaHuman's friendly face exploits cognitive biases, obscures government accountability, and risks turning migrants into passive data subjects rather than empowered citizens.

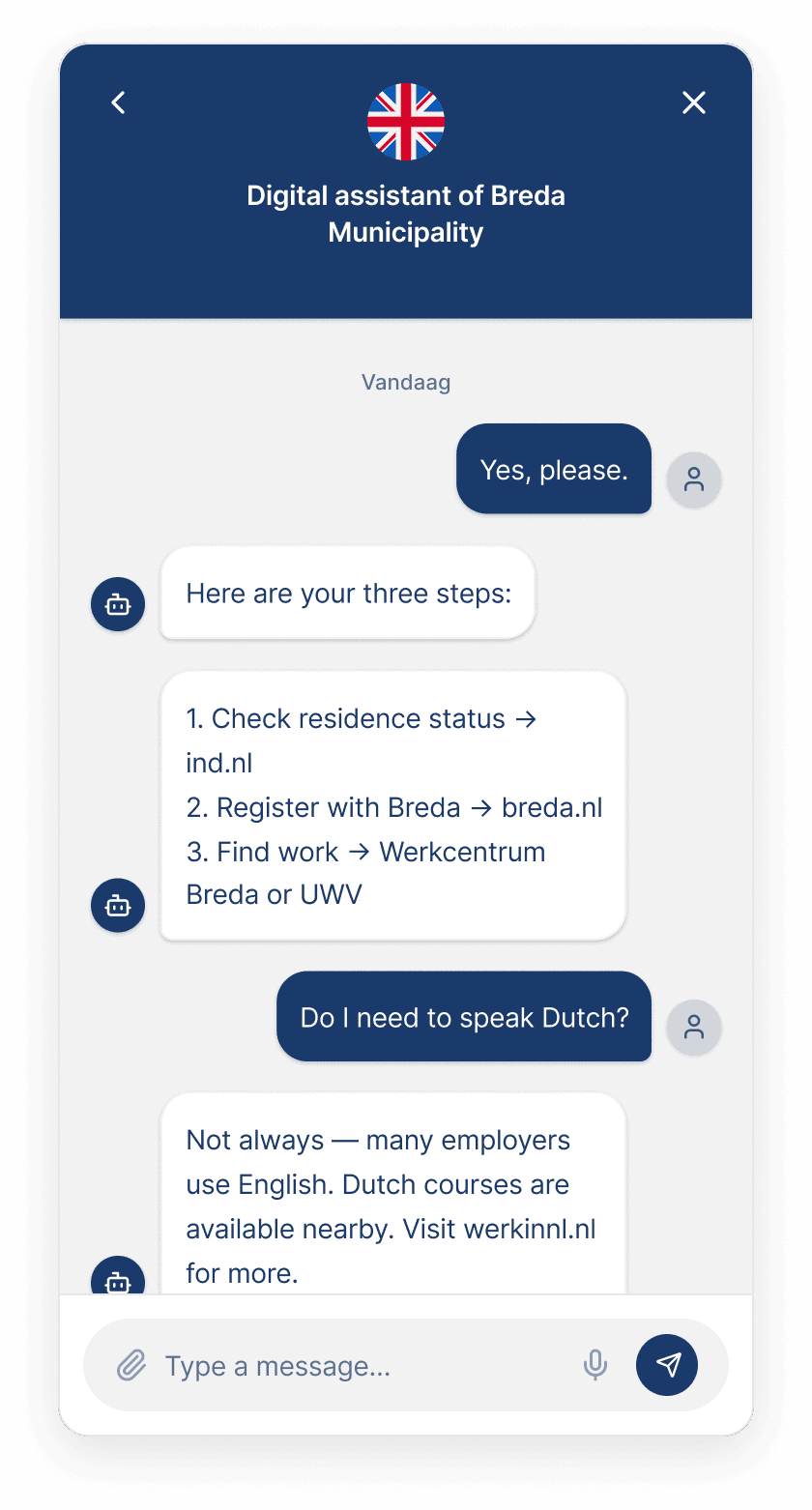

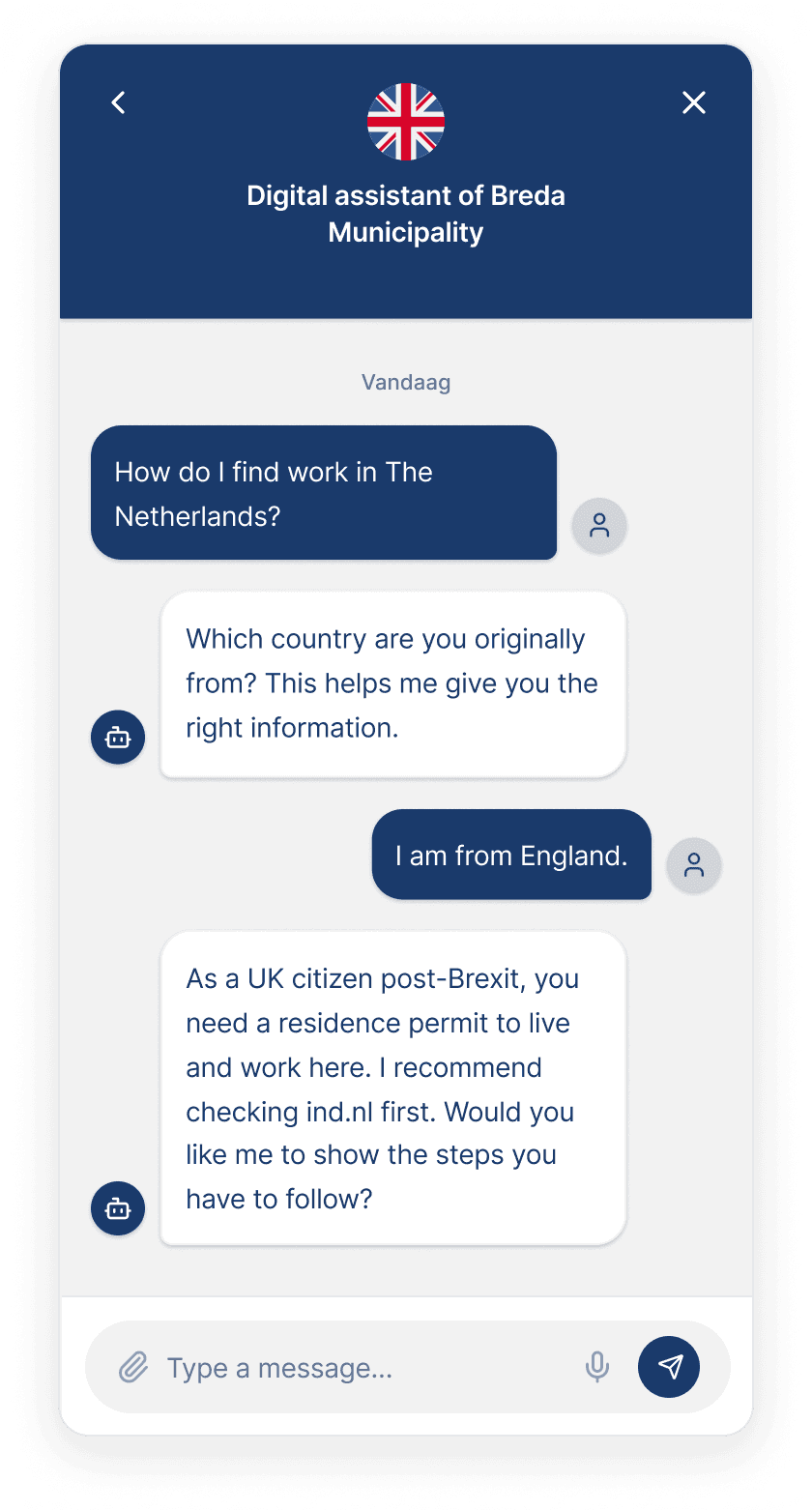

I redesigned the conversation flow so that every screen structurally earns trust rather than assuming it. The language selector now pre-selects English with a visible border, reducing cognitive load. The avatar speaks the selected language from the first message, so there is no confusing switch mid-conversation. Before giving legal advice, it asks for the user's nationality, prioritizing accuracy over speed. Complex procedures are broken into numbered steps with links to official government sources, and two reply buttons at each stage let users steer the conversation without being overwhelmed by unsolicited information.

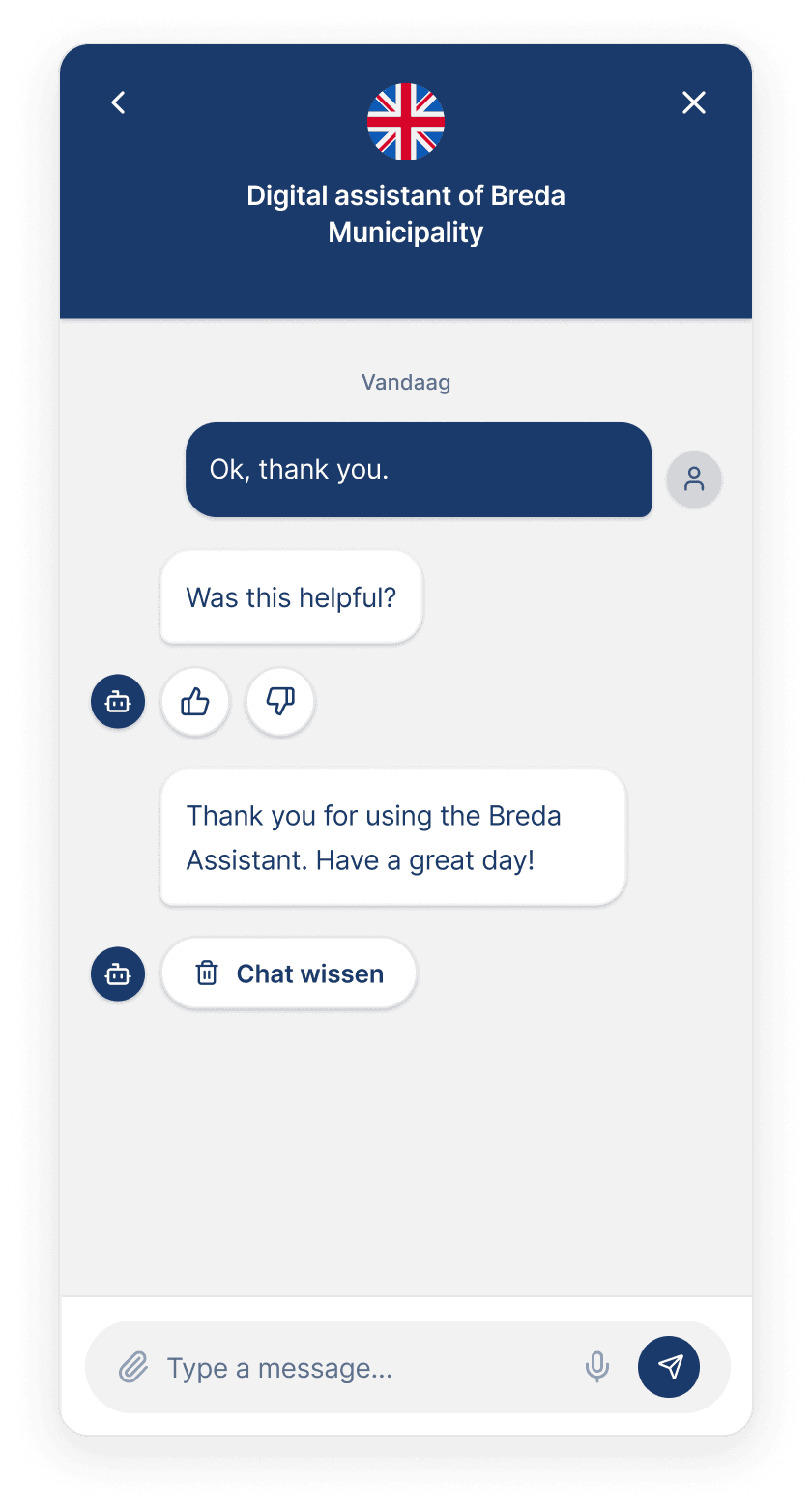

The platform itself shifted from a fixed public kiosk to a web application accessible on any device, removing the privacy barrier and enabling 24/7 access. For error recovery, the avatar explicitly takes responsibility by saying "Sorry, that was my mistake," then offers a flag-based visual fallback: users tap a country flag to hear their language spoken aloud, which requires no reading ability. The closing screen offers the option to erase the entire chat, addressing privacy concerns that are critical for a government service used by vulnerable populations.

These shifts translated into concrete outcomes:

- Four trust barriers identified and addressed through the redesign
- Three academic trust theories synthesized into one actionable design framework
- Access expanded from a public kiosk to a private web application on any device
- Avatar design direction shifted from hyper-realistic to trust-centered